One year of AI

In May 2025 I stood here and said some fairly definitive things about where AI was headed. Today we settle the accounts: what did I get right, what did I get wrong, and what did I miss entirely?

Swedish Champion in AI Prompting 2025 · CERN alumnus · Adage alumnus

A little about me — one year later

AI enablement talks, workshops.

AI-first agentic workflow, every day.

How big an innovation is AI? (click a level)

Nate Silver’s logarithmic scale for technological impact. Each step is an order of magnitude. Where does AI sit?

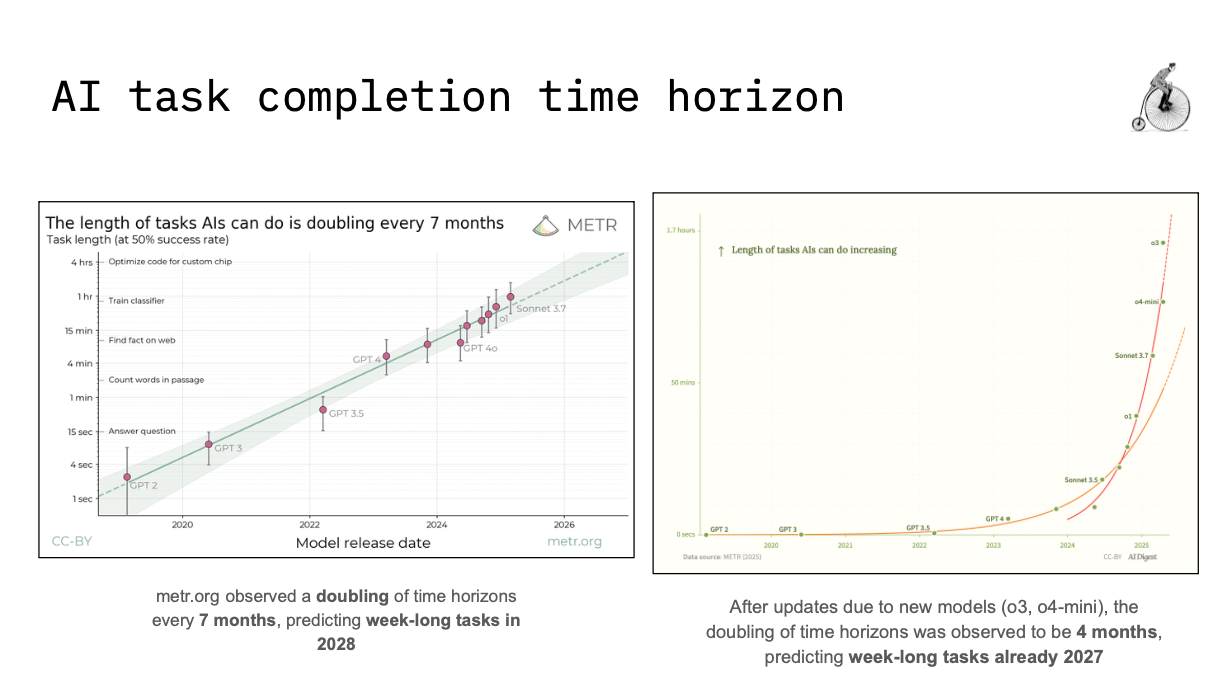

The METR scale — what I predicted

METR (Model Evaluation & Threat Research) measures how long AI can autonomously complete a task without human help — the time horizon. The doubling rate was the basis for all my 2025 predictions.

12 months — and the task horizon has sextupled
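As a rough check on the headline above, the doubling time implied by a sixfold increase in 12 months can be worked out directly. This is a minimal sketch that assumes smooth exponential growth; the function names are illustrative and are not taken from METR.

```python
import math


def implied_doubling_months(growth_factor: float, elapsed_months: float) -> float:
    """Doubling time implied by growing `growth_factor`x over `elapsed_months`.

    Assumes smooth exponential growth (an assumption, not a METR result).
    """
    if growth_factor <= 1 or elapsed_months <= 0:
        raise ValueError("need growth_factor > 1 and elapsed_months > 0")
    return elapsed_months * math.log(2) / math.log(growth_factor)


def projected_multiplier(doubling_months: float, horizon_months: float) -> float:
    """How many times longer the task horizon gets after `horizon_months`."""
    return 2 ** (horizon_months / doubling_months)


if __name__ == "__main__":
    d = implied_doubling_months(6, 12)  # "sextupled in 12 months", from the slide
    print(f"implied doubling time: {d:.1f} months")
    print(f"multiplier after another 12 months: {projected_multiplier(d, 12):.1f}x")
```

Under that assumption, a sixfold increase in a year corresponds to a doubling roughly every four and a half months, and another year at the same pace would mean another sixfold increase.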

Can an AI make you feel seen?

“Oh, now I feel depressed. Please help me pick up the pieces.” — my own demo, Adage May 2025

A year later it’s no longer a joke. It’s a public health phenomenon.

“Please don’t kill the only model that still feels human.” — Reddit r/MyBoyfriendIsAI and #Keep4o, February 2026

- BBC ran a Valentine’s Day feature: “Rae fell for a chatbot. Their love might die when GPT-4o is switched off.”

- Character.AI — two teenagers’ suicides linked to the platform. Settlements ongoing.

- Research on AI companionship & loneliness has gone from 2 to 9 publications per year — the topic is being taken seriously.

AI leaders’ AGI timelines — have they shifted?

| Person | May 2025 | | Now (winter/spring 2026) |
|---|---|---|---|
| Sam Altman (OpenAI) | “AGI by 2025-ish” | → | “AGI kinda went whooshing by. Takeoff has started.” Now aiming for superintelligence. |
| Dario Amodei (Anthropic) | “2–3 years” | → | Unchanged: “powerful AI 2026–2027”. 25% chance it goes very badly. |
| Demis Hassabis (Google DeepMind) | “Within a decade” | → | “Very good chance within 5 years.” Also: “10 industrial revolutions in 10 years” |
| Jensen Huang (Nvidia) | “5 years” | → | Shifted focus to “physical AI”: 10 billion humanoid robots. |
| Elon Musk (xAI / SpaceX) | “By 2026” | → | xAI acquired by SpaceX (Feb 2026). Continues to sprawl, hard to follow. |
| Yann LeCun (ex-Meta → AMI Labs) | “LLMs wrong approach” | → | Left Meta. “Utter delirium to talk about AGI in 1–2 years.” |
| Metaculus / researchers (consensus) | ~2040 | → | Still ~2040. The gap between insiders and researchers is growing. |

Legally, ethically, practically — what happened?

I mentioned Meta’s “no economic value” quote, and assumed the question would be tied up in the courts for over a decade.

Bartz v. Anthropic, Sep 2025 — $1.5 billion settlement. Largest copyright settlement in US history. ~$3,000 per book across ~500,000 pirated titles.

Ruling (June 2025): building a library from pirated copies = not fair use. Training on purchased and scanned books = fair use. Anthropic pays for the piracy, but the training practice itself survives.

I said: xAI Colossus — ~150 MW now, multi-GW planned. Sounded abstract.

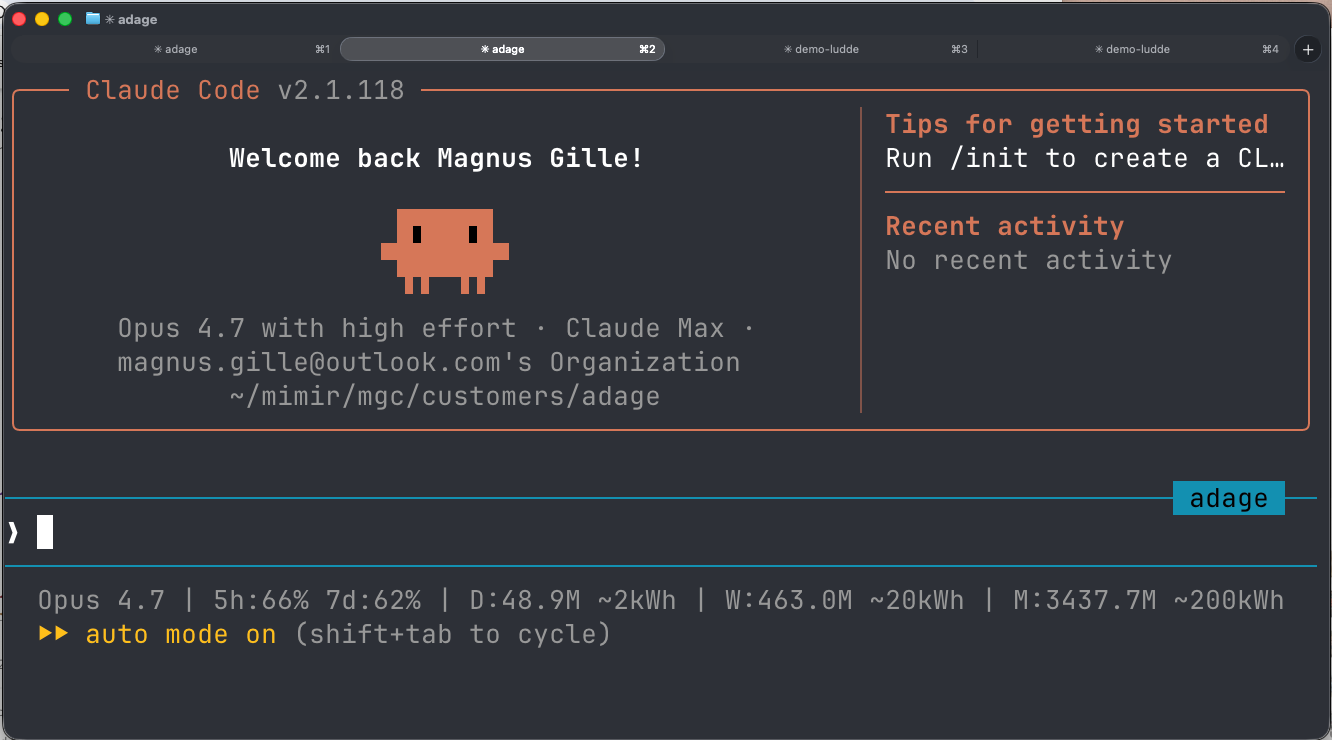

My own AI consumption exploded when I started running Claude Code daily. I wrote a blog post about it.

- Microsoft restarts Three Mile Island. Google buys SMR nuclear power. Meta builds a 1 GW datacenter.

- Sweden alone: 6.7 GW of datacenter grid connections applied for in electricity bidding zone SE3 (elområde 3), more than the capacity of six 1 GW nuclear reactors. The grid can’t keep up.

- My entire working day depends on anthropic.com and the US power grid. That’s a systemic risk.

AI runs on electricity — this is a geopolitical fact

China generates roughly twice as much electricity as the US, and the gap is widening. AI infrastructure follows energy, not borders.

Sweden: clean energy, cold climate, perfect for datacenters — but only ~140 TWh/year total. We already have 6.7 GW applied for in one grid region. The infrastructure cannot keep up.
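To put the two Swedish figures above on the same scale, grid capacity in GW can be converted to annual energy in TWh. This is a back-of-the-envelope sketch: the `load_factor` parameter and the function name are my own, and a load factor of 1.0 (the full connection drawn around the clock) is an upper bound rather than a forecast.

```python
HOURS_PER_YEAR = 8760  # non-leap year


def gw_to_twh_per_year(gigawatts: float, load_factor: float = 1.0) -> float:
    """Annual energy in TWh for an average draw of `gigawatts * load_factor`.

    load_factor=1.0 assumes the connection is fully used all year, which is
    an upper bound; real datacenters draw less than their grid connection.
    """
    return gigawatts * HOURS_PER_YEAR * load_factor / 1000


applied_gw = 6.7   # applied-for connections in one grid region, from the slide
sweden_twh = 140   # approximate annual total, from the slide

demand_twh = gw_to_twh_per_year(applied_gw)
share = demand_twh / sweden_twh
print(f"{demand_twh:.1f} TWh/year, {share:.0%} of ~{sweden_twh} TWh")
```

At full utilisation the applications would correspond to a bit under 60 TWh per year, on the order of 40% of the ~140 TWh total quoted above. Lowering `load_factor` shows how quickly that share shrinks if only part of the applied capacity is ever used.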

The defining geopolitical question of this decade: who builds AI, who powers it, and who regulates it? These are no longer the same answer.

Cyber — from theoretical to industrial

“Cybersecurity is one of the now-risks.” A bit hand-wavy — no concrete incident to back it up.

Anthropic Threat Intelligence Report, Aug 2025 — three concrete cases of Claude being misused:

One person, 17 victims

A lone actor let Claude Code handle the entire attack chain: reconnaissance, credential harvesting, exfiltration, ransom calculation, psychologically tailored extortion demands. Healthcare, emergency services, government agencies. Ransoms > $500,000.

IT jobs as state income

North Korean operators used Claude to pass technical recruitment at Fortune 500 companies — then the AI did the actual job, while the salary went to the regime. Scales a phenomenon that otherwise required years of training.

Ransomware-as-a-service

A low-skill cracker let Claude build ransomware variants with advanced evasion, sold them on the darknet for $400–$1,200. According to Anthropic itself: the actor was “dependent on AI just to produce functional malware.”

Claude Mythos Preview: the most capable model ever built — specifically for cybersecurity. Autonomously found thousands of zero-days across every major OS and browser. Developed 181 working Firefox exploits in testing (Opus 4.6: 2). Not being released to the public.

Anthropic is giving Mythos to 40+ organizations (Amazon, Apple, Microsoft, Google, CrowdStrike…) to scan and secure critical open-source infrastructure. $100M in usage credits. A model too dangerous to ship — only usable for defense.

Deepfakes — did the wave come?

Nuance first: the big, clear-cut “AI deepfake decides an election” moment didn’t happen in 2024. But the industrial, everyday reality of synthetic fraud did arrive.

$25M in a video meeting

Engineering firm Arup transferred 200M HKD after a Teams meeting where all other participants — including the CFO — were AI-generated. The largest documented single deepfake fraud.

TikTok protests

Russia-linked network used AI-generated videos to stir up anti-government protests and undermine the ruling party. Documented by DFRLab.

900,000 incidents

Sensity AI logged over 900,000 deepfake incidents in a year. 53% of companies in US/UK have been exposed to deepfake fraud. 43% became victims.

- TAKE IT DOWN Act (USA, May 2025) — non-consensual AI porn became a federal crime

- First conviction: Apr 2026, Ohio — 700+ AI images of 6 women

- EU AI Act — full compliance Aug 2026. Fines up to €35M / 7% turnover.

- Sora (OpenAI, Dec 2024) + Veo 3 (Google, May 2025) — photorealistic video with synced audio

- ElevenLabs voice cloning: “easy as pie” (Consumer Reports)

- C2PA / Content Credentials: adopted on paper, not in practice
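The “€35M / 7% turnover” figure in the EU AI Act bullet above is a ceiling, not a fixed amount. As I read the Act’s top penalty tier, the cap for a company is whichever of the two is higher. A minimal sketch of that rule, with a hypothetical function name and made-up turnover figures:

```python
FIXED_CAP_EUR = 35_000_000   # top-tier fixed amount, from the slide
TURNOVER_PERCENT = 7         # top-tier share of worldwide annual turnover


def max_fine_eur(worldwide_turnover_eur: float) -> float:
    """Upper bound on a top-tier fine for a company.

    Assumes the 'whichever is higher' reading of the top penalty tier;
    actual fines are set case by case and can be far lower.
    """
    turnover_cap = worldwide_turnover_eur * TURNOVER_PERCENT / 100
    return max(FIXED_CAP_EUR, turnover_cap)


# Illustrative turnovers only, not real companies.
for turnover in (100_000_000, 500_000_000, 10_000_000_000):
    print(f"turnover €{turnover:,} -> cap €{max_fine_eur(turnover):,.0f}")
```

The two caps cross at €500M in turnover: below that the fixed €35M dominates, above it the 7% share does.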

The money — it goes in circles

“Circular investment” got its own Wikipedia section in December 2025. The money goes into AI companies — and directly back to the investor as infrastructure purchases. No demand from outside the circle is needed.

What this means for us: 30% of the S&P 500’s value sits in five companies, the highest concentration in 50 years. When the circle breaks, it takes everything with it.

Anthropic vs. Pentagon

The story isn’t “safety company sells out to the military.” It’s more interesting than that.

- Summer 2025: DoD awards $200M contracts to Anthropic, Google, OpenAI and xAI — Claude enters classified systems

- Already in 2024: Palantir partnership for AWS-gov

- Anthropic’s AUP (Sep 2025) leaves the door open for contract-specific exceptions

- Dario: Claude must not be used for mass surveillance of Americans

- Dario: Claude must not make autonomous lethal decisions in weapons systems

- Pentagon: no, remove that

- 5 March 2026: Pentagon labels Anthropic a “supply chain risk”, a designation normally reserved for Huawei

- 9 March 2026: Anthropic sues. Employees from OpenAI and Google file amicus briefs in Anthropic’s defense

- 26 March 2026: Judge Rita Lin (N.D. Cal.) sides with Anthropic, citing “classic First Amendment retaliation”; Pentagon forced to withdraw the designation

- Warren: “political retaliation.” OpenAI signed its own DoD deal on 28 February — without red lines

What happened between these two presentations

Claude 4 — first ASL-3 model

Anthropic itself classifies its own model as “potentially dangerous.” A new normal.

Grok goes off the rails

Grok 4 generates antisemitic posts and Hitler tributes unprompted. In January 2026: synthetic CSAM, followed by bans in Indonesia, Malaysia and the Philippines.

GPT-5 launches

Altman hyped it as Manhattan Project-level. Users: “flat, boring, fails on geography.” A symbol that scaling alone is not enough.

“Vibe coding” — Collins word of the year

The term barely existed when I stood here last year. Now a dictionary entry.

Stargate scales up

OpenAI adds 5 new sites — Stargate’s $500B, four-year plan now at ~7 GW of planned datacenter capacity. The geopolitical-economic story of the year.

OpenAI goes for-profit

After months of legal proceedings, OpenAI converts to a PBC. The foundation owns 26%. The very soul of the industry up for a vote.

Gemini 3 lands — and AI Mode rolls out

Google’s Gemini 3 Pro ships; AI Mode in Search (Labs since March) scales up. The search box changed definition — biggest direct AI change for ordinary users in 2025.

SpaceX acquires xAI

Musk merges his companies. Compute, capital and infrastructure concentrated in a private empire without public governance.

OpenAI shuts down GPT-4o — #Keep4o

Grieving users, Reddit thread collapse, BBC feature on “AI love.” OpenAI forced to keep 4o available for paying accounts.

DeepSeek — stole from Claude

Anthropic accuses DeepSeek of running thousands of fake accounts to harvest Claude conversations. Meanwhile: DeepSeek trained on chips under export controls.

OpenAI — $852B valuation

Round announced in February, closed in April. Largest private venture round in history. Amazon $50B, SoftBank $30B, Nvidia $30B. Bubble or not?

This presentation is the change

Google Slides · manually · several days

Cut, paste, search sources, format text. Images. Layouts. Slides app, Google Drive, coffee mugs. I did the research myself, slide by slide.

The terminal · Claude Code · under a day

I wrote an outline. Claude Code exported the original from Google Slides, spawned 8 parallel research agents, read my own blog, and built an interactive HTML deck, all in the terminal. And the result is better sourced.

Last year I talked about the AI revolution as something happening around us. This time I couldn’t have done this without it. If you look at a slide and wonder “how?” — that’s the whole point.

My setup — a terminal, an agent, and a budget in kWh

The physical half of the setup

“Isn’t that hard?”

My daughter. Fifteen minutes of onboarding. She went from “what’s a terminal?” to shipping her own browser game — Kattungen — and happily playing it.

Every assumption we held about UI/UX — affordances, menus, polished onboarding flows, progressive disclosure — is being re-examined in real time. The interface is conversation.

If an 8-year-old can ship software in an afternoon — what does your team’s software development process look like a year from now?

Thank you — see you in a year?

If the METR curve holds I’ll be standing here in 2027 talking about week-long tasks. I hope we keep up.

Last year the advice was explore. This year the advice is integrate — the experimental window is starting to close.